Blog

Engineering notes, production patterns, and guidance for building with AI agents.

Prompt Engineering for Production: Beyond 'It Worked Once'

Prompts are code. Version them, test them, review them. The production prompt engineering discipline that makes AI workflows reliable.

Read post

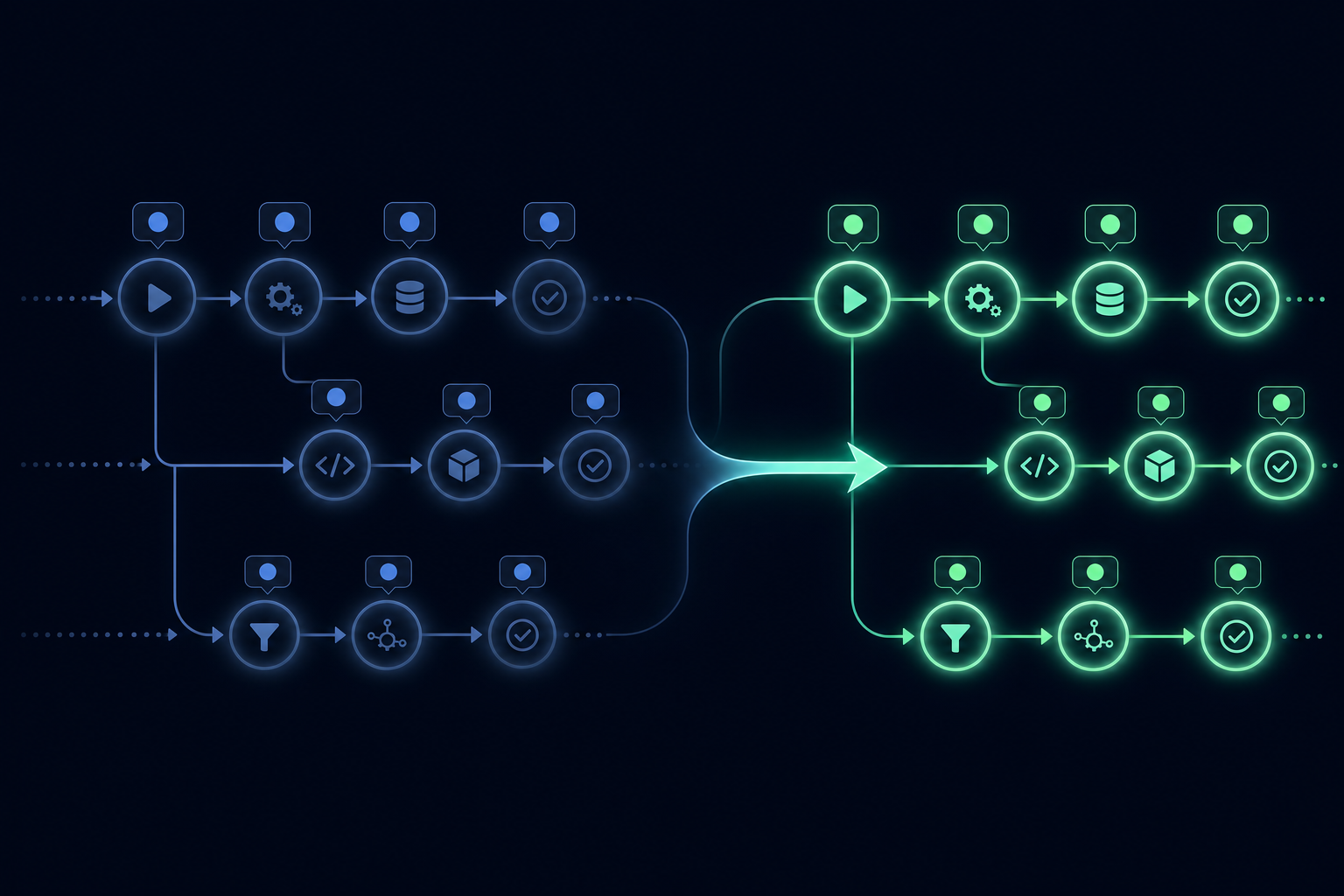

How to Build a Multi-Agent System That Actually Works

The orchestrator pattern, communication strategies, shared state, and failure isolation for multi-agent AI architectures.

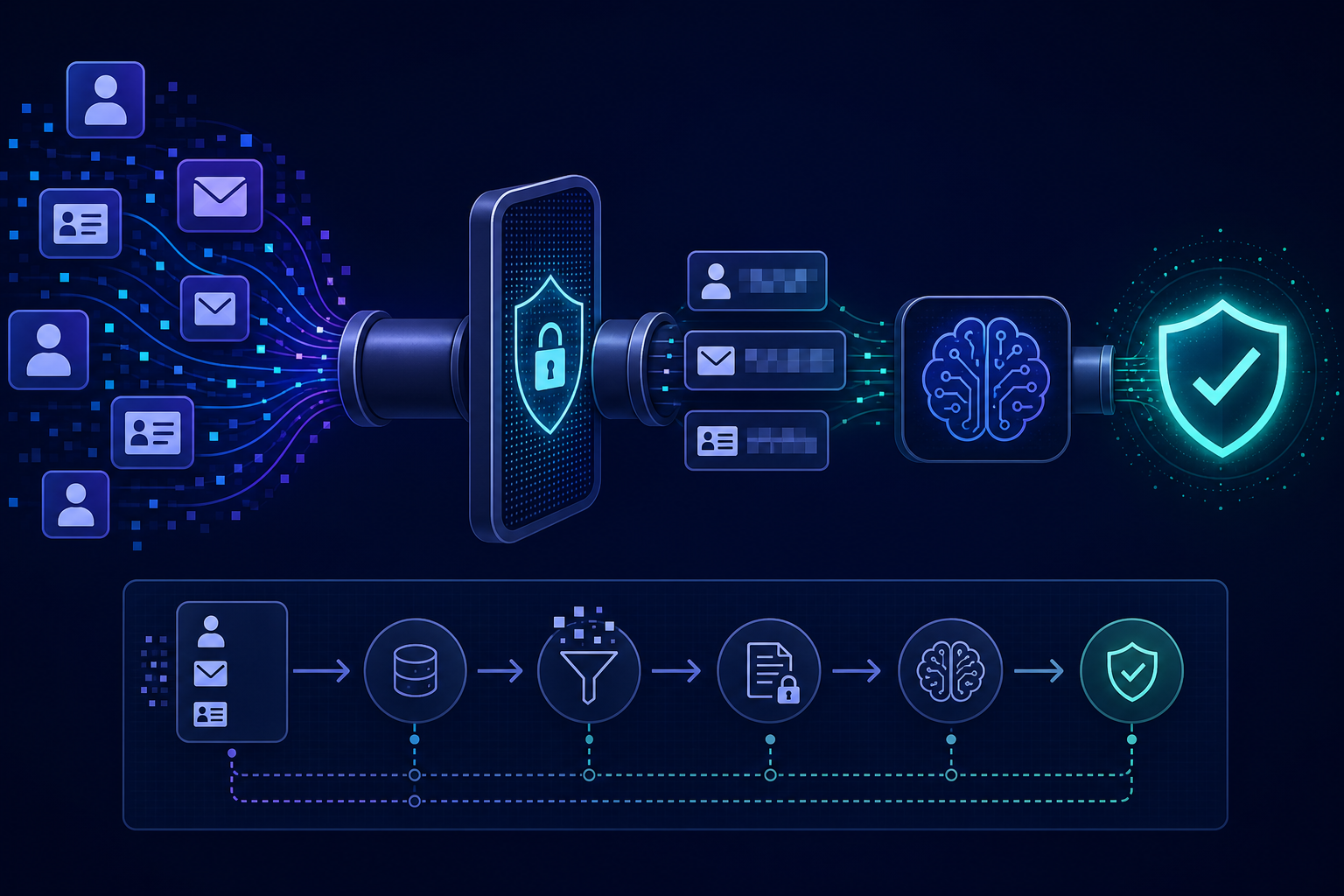

AI Agents and PII: Data Handling Patterns That Keep You Compliant

Mapping data flows, LLM provider DPAs, PII minimization in prompts, and retention policies for run state — what every AI workflow team needs to get right.

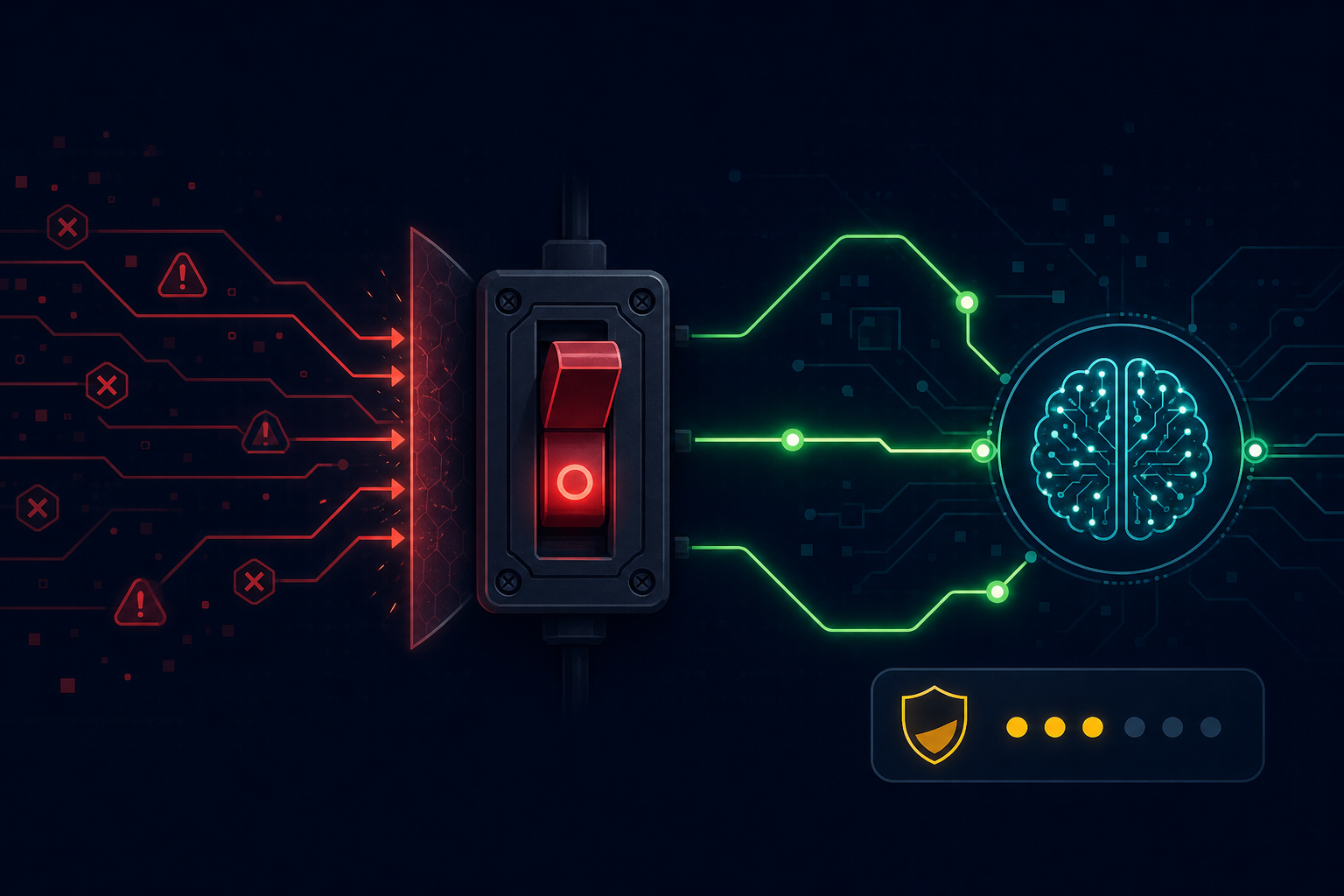

Prompt Injection Attacks: How to Defend AI Workflows

Structural separation, output validation, privilege separation, and monitoring — the four defense layers against prompt injection in production AI systems.

Zero-Downtime Deployments for AI Workflows

Drain strategies, version-aware execution, and backward-compatible migrations — how to deploy new workflow versions without losing in-flight runs.

Provider Portability: Building LLM-Agnostic AI Workflows

The abstraction layer, prompt portability, production failover, and cost arbitrage that come from not coupling tightly to a single LLM provider.

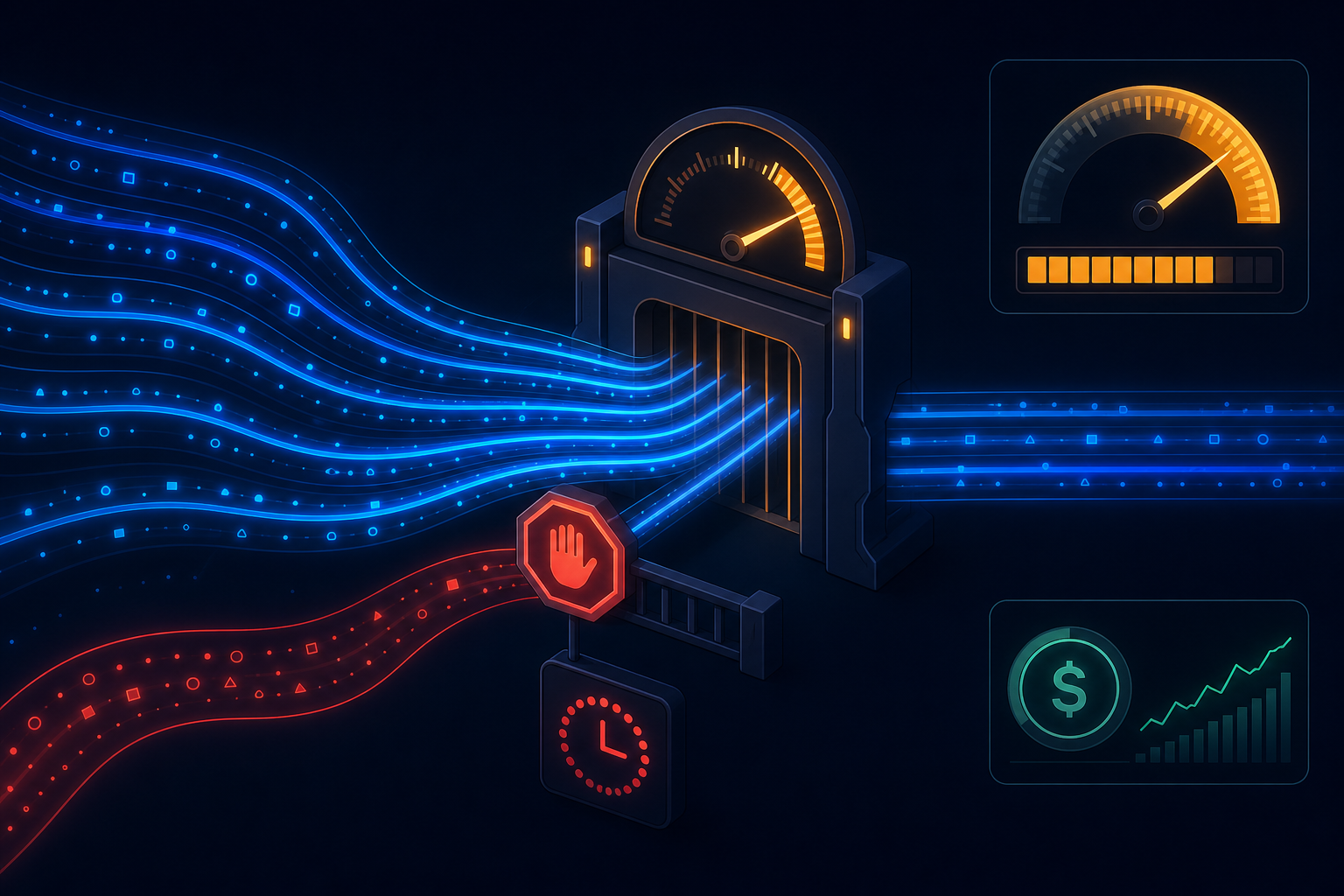

Queue Design for AI Workloads: Why Standard Patterns Need Adjustment

Cost heterogeneity, LLM rate limit back-pressure, priority queuing, fan-out management, and dead-letter observability for AI workflow queues.

Workflow Debugging: How to Find What Broke

The debugging hierarchy, step replay, structured error classification, and cross-run correlation — the observability stack that makes AI workflow debugging systematic.

Building an AI Research Assistant with AgentRuntime

Query decomposition, parallel information gathering, synthesis, and citation annotation — the four phases of a production AI research workflow.

Building an AI Invoice Processing Pipeline

Intake, extraction, PO matching, GL coding, and approval routing — how to build an AP automation pipeline that handles real-world invoice variance reliably.

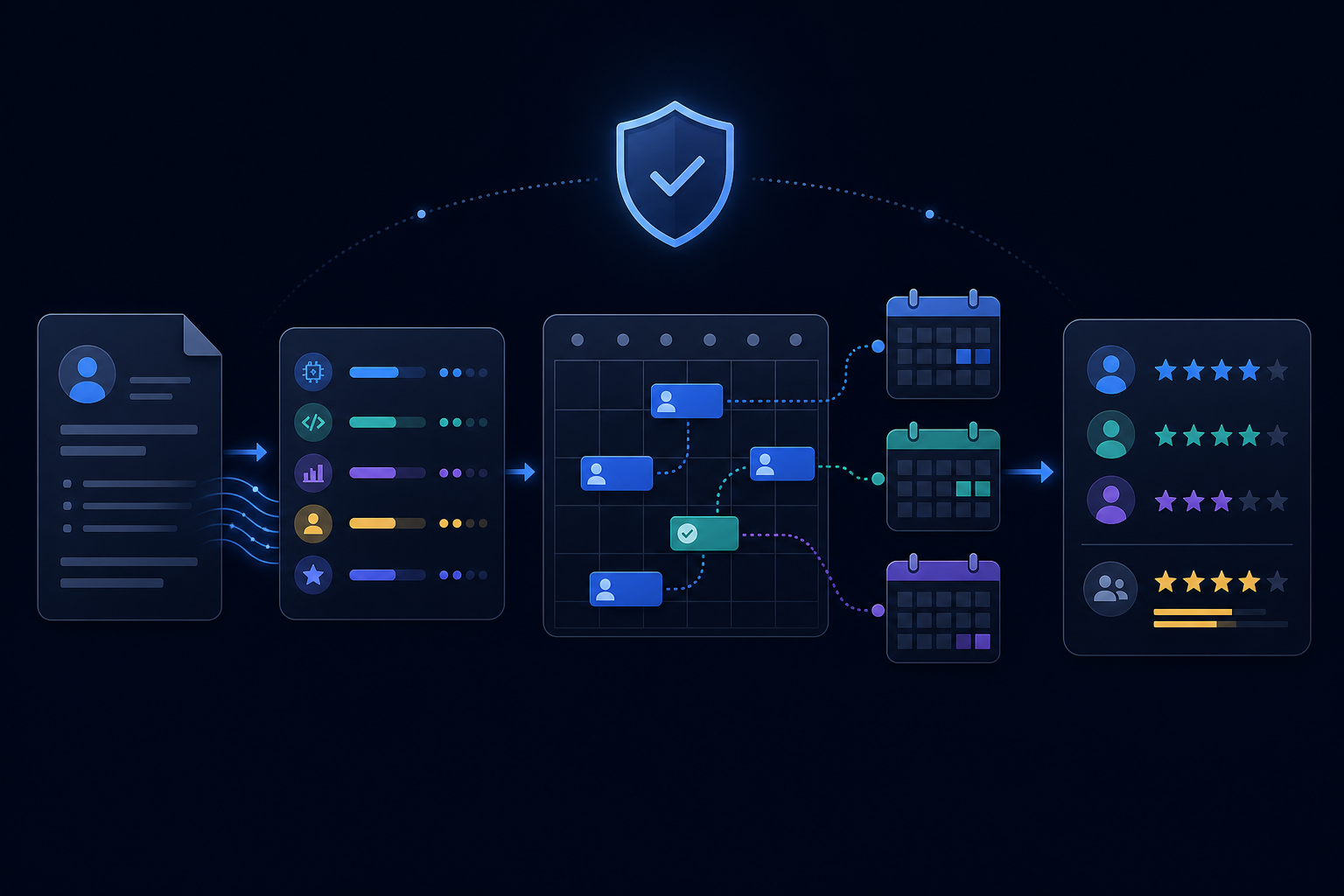

AI Agents for HR: Resume Screening and Interview Scheduling

The right way to build AI-assisted hiring workflows: scoring for human review, scheduling automation, and the compliance layer that makes it legally deployable.

AI-Powered Content Moderation: Building Systems That Scale

Layered classification, context-aware moderation, appeal workflows, and the dual error trade-off — how to build content moderation that is both scalable and fair.

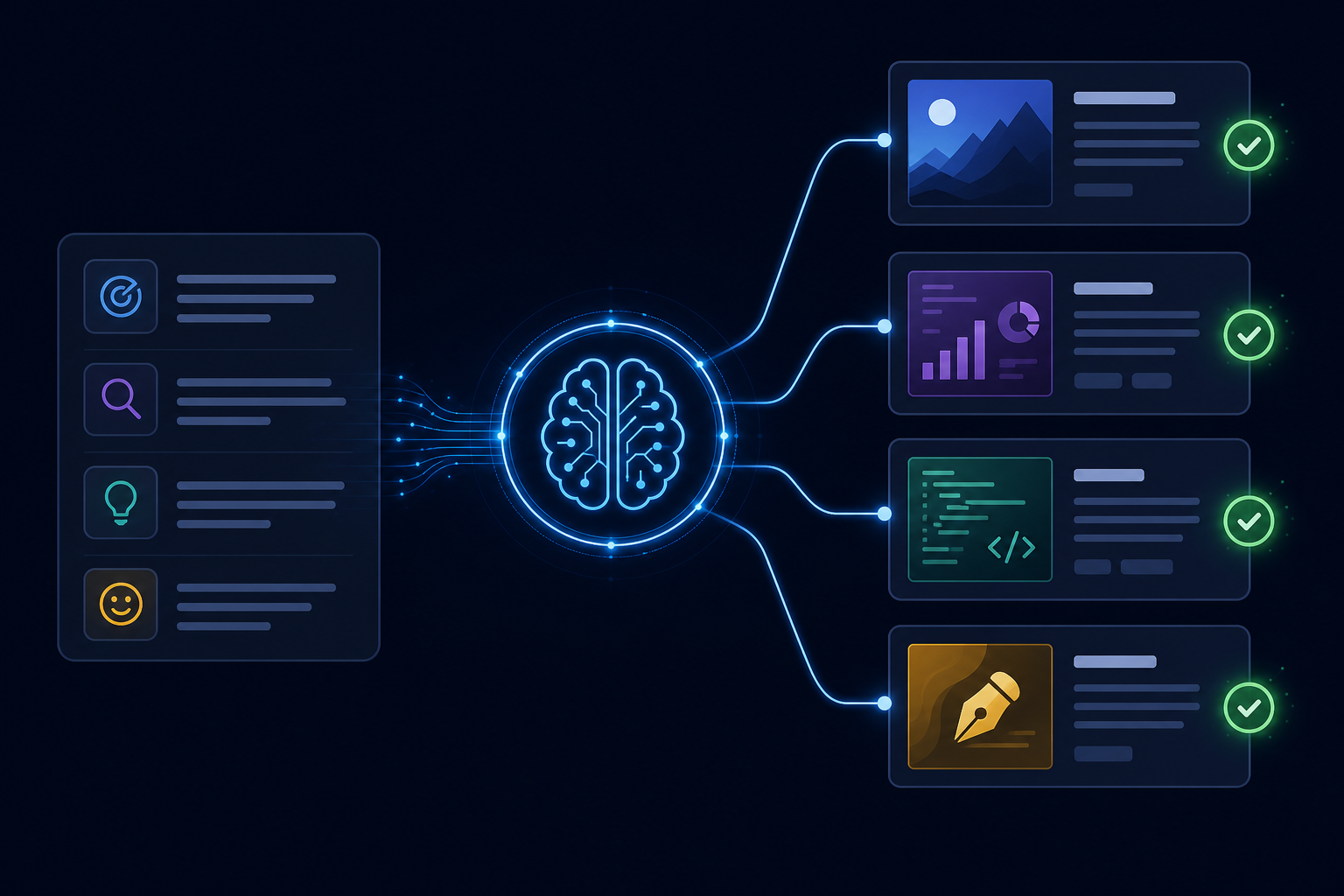

Building a Content Generation Pipeline That Maintains Quality at Scale

Brief generation, differentiation injection, quality evaluation, and brand voice enforcement — the infrastructure behind consistent AI content at volume.

AI Agents for E-Commerce: Automating Order Management

Fraud review, exception handling, customer inquiry triage, and returns processing — where AI adds value in order management workflows.

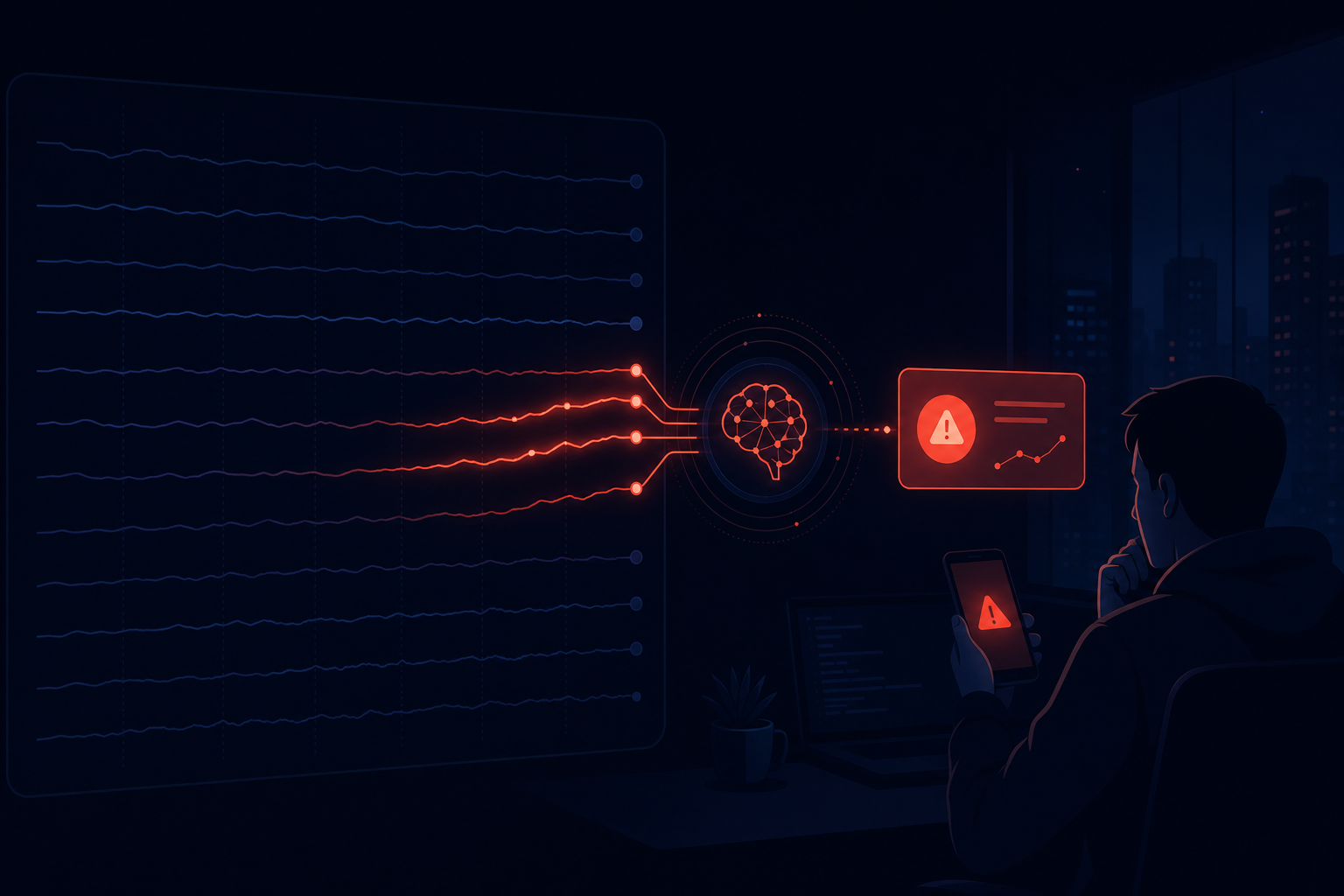

Building an AI Monitoring Pipeline: Using Agents to Watch Your Systems

Why threshold alerting misses complex incidents, and how LLM correlation analysis detects multi-signal degradation before individual metrics cross thresholds.

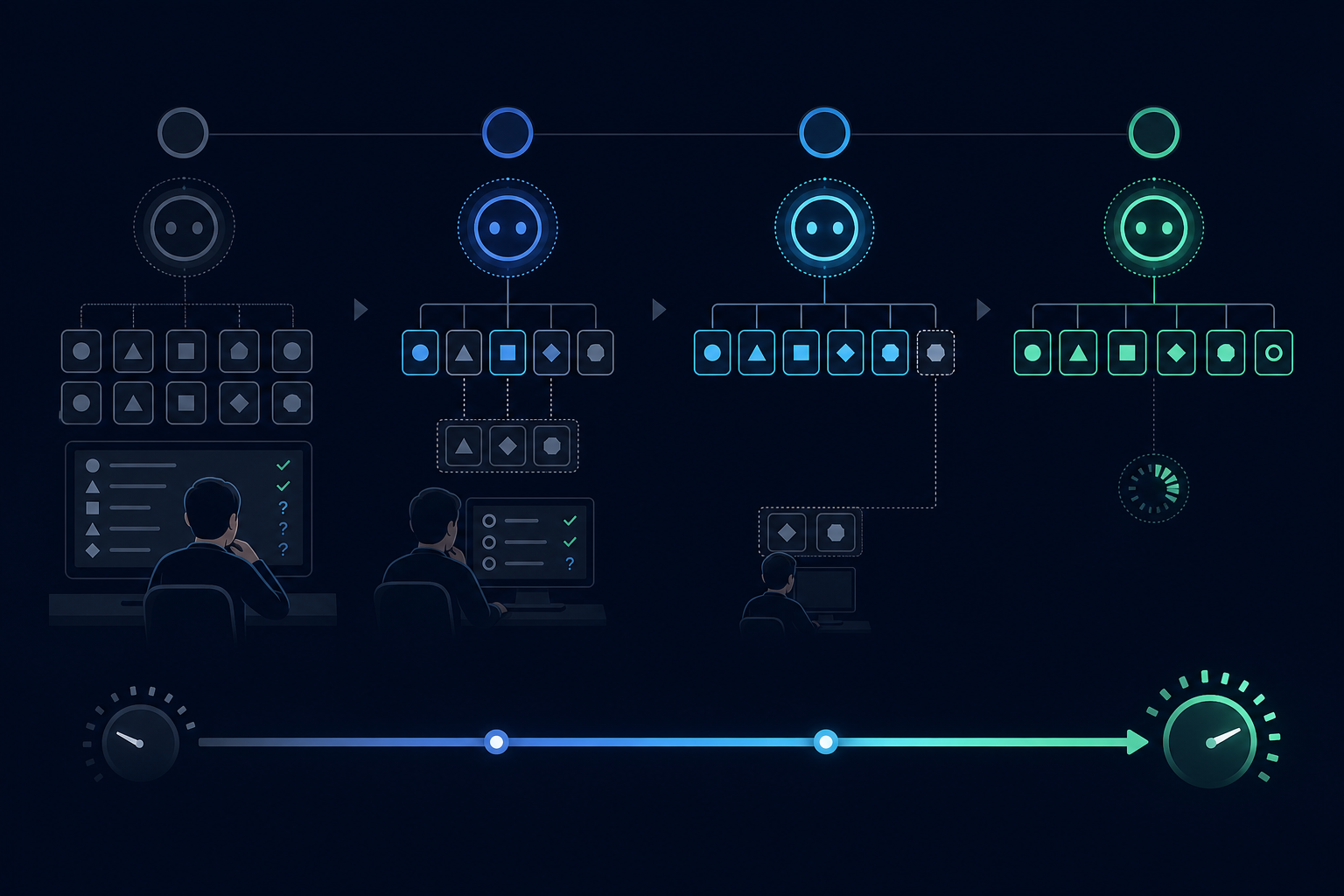

The Cold Start Problem for AI Agents: What Breaks Before You Have Data

Over-automation risk, edge case distribution gaps, shadow mode, and gradual rollout thresholds — how to reach steady-state reliability without a painful cold start.

Measuring AI Workflow ROI: The Metrics That Actually Matter

Baseline cost, quality-adjusted throughput, time-to-value, and what to do when the ROI is negative — a rigorous framework for AI investment measurement.

Why Workflow-Level Tracing Beats Function-Level Logging for AI Systems

Logging tells you what happened at a line of code. Tracing tells you what happened during an entire operation. For AI workflows, that is the difference between debugging and guessing.

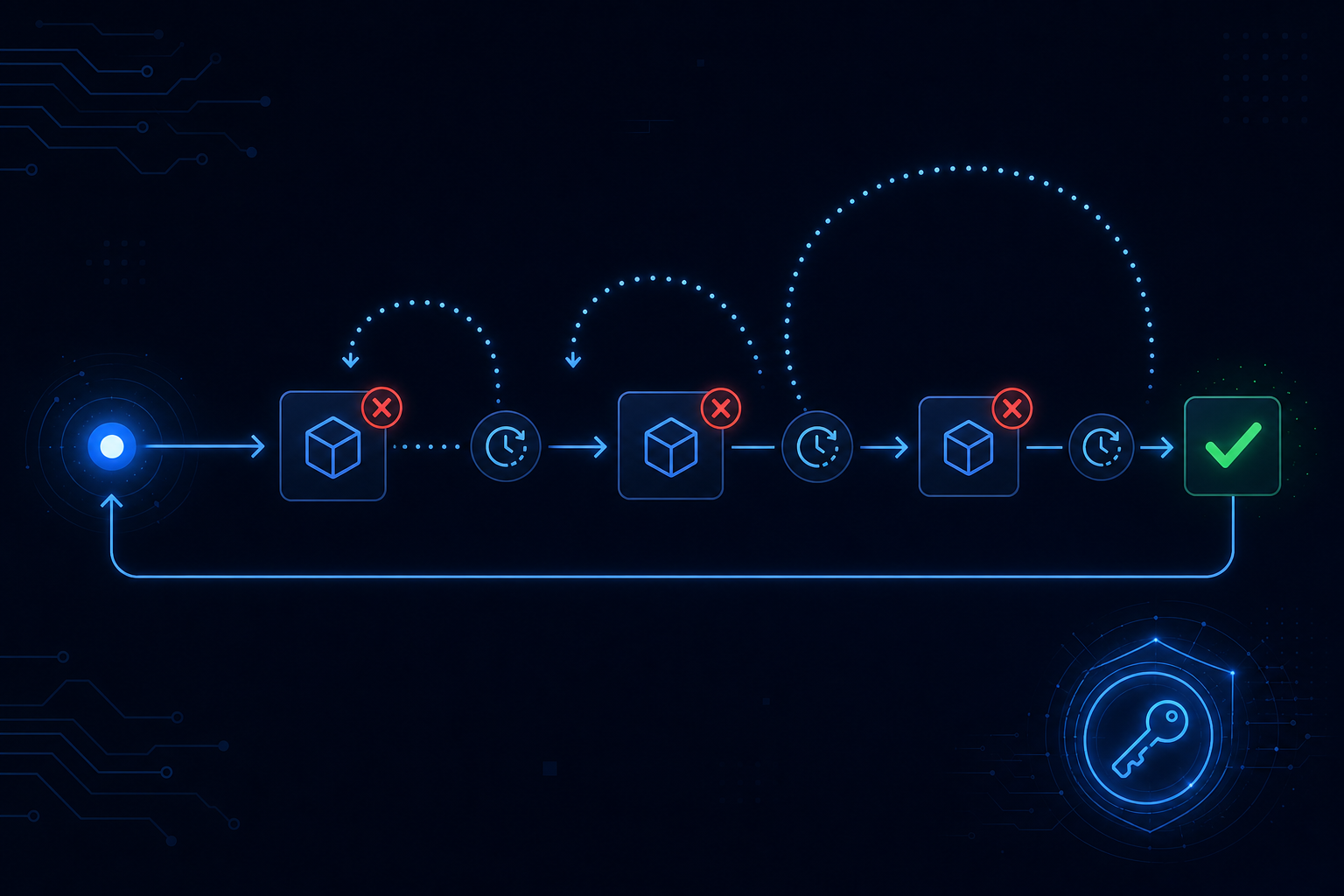

Retry Logic for AI Agents: Beyond try/catch

Why naive retries cause duplicate actions in AI workflows, and how idempotency keys, exponential backoff, and dead-letter queues make retries safe.

The Agent Memory Problem: State, Context, and Recall

Working memory, run memory, and long-term memory are three different problems. Most agents conflate them — and pay the price at scale.

Rate Limits Are Not Your Problem — Until They Are

How LLM API rate limits work, why they become production problems, and the strategies for managing cost and throughput at scale.

How to Test AI Workflows Before They Hit Production

A four-layer testing strategy for AI workflows: unit tests, mocked integration tests, snapshot tests, and evaluation harnesses.

Timeouts and Deadlines for AI Agents: Setting SLAs That Actually Hold

The difference between timeouts and deadlines, how the stuck-workflow problem emerges, and what a production timeout strategy looks like.

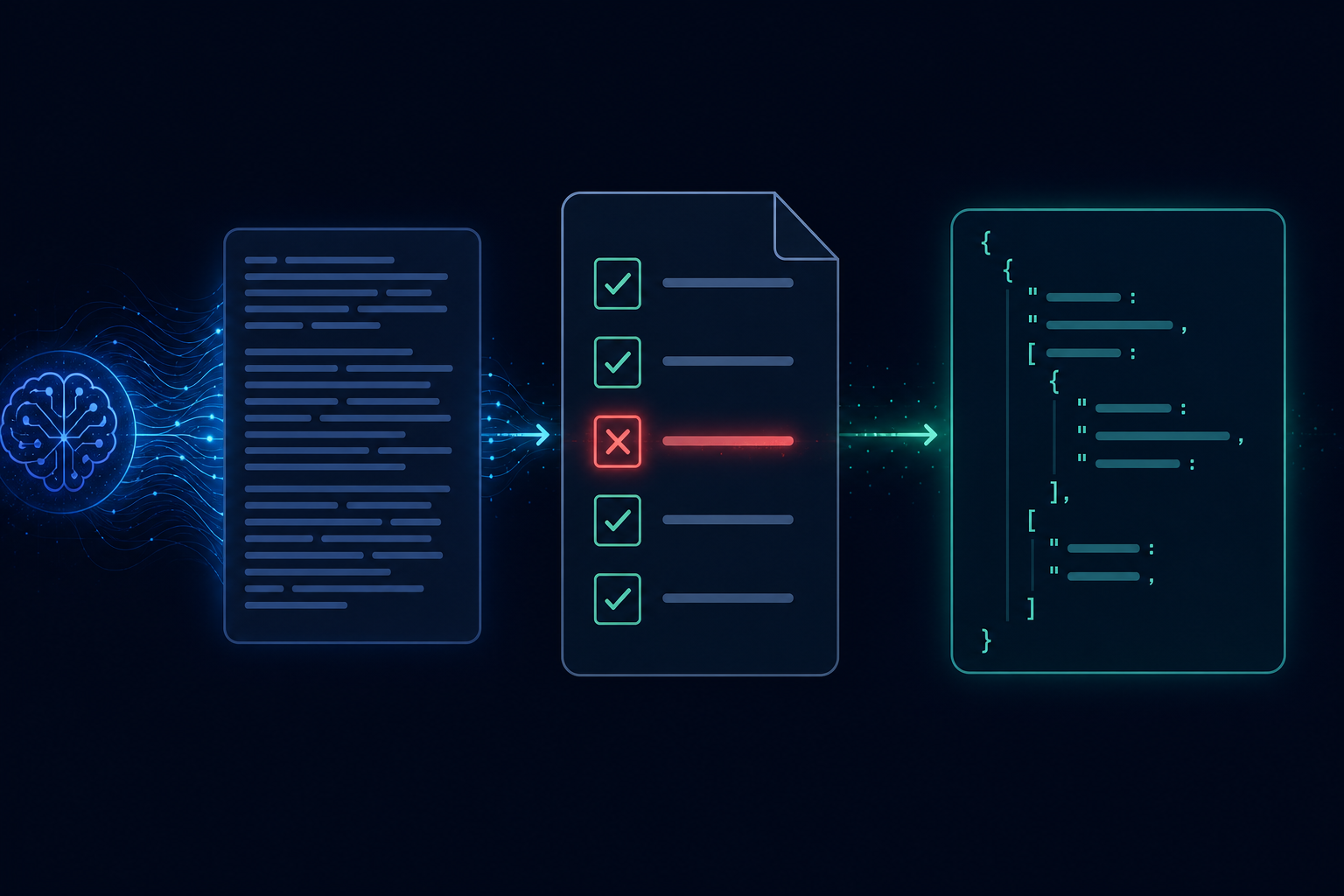

Structured Output from LLMs: Why JSON Mode Is Not Enough

JSON mode guarantees valid JSON, not correct JSON. Schema validation, structured output APIs, and retry-on-failure patterns for reliable LLM output.

From Notebook to Production: The AI Agent Deployment Gap

What a Jupyter notebook doesn't model — concurrency, partial failures, state persistence, observability — and the migration checklist for getting to production.

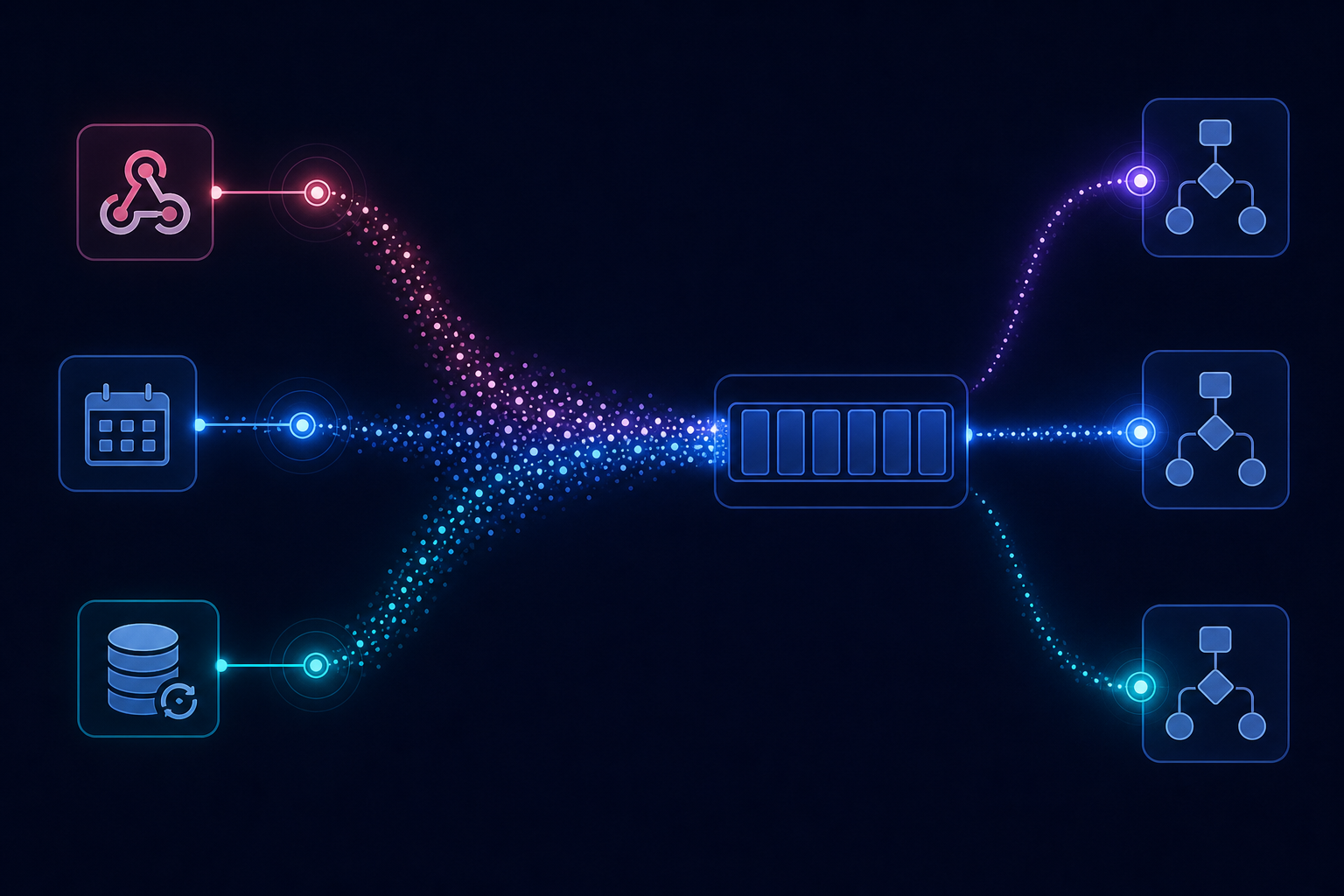

Event-Driven AI Workflows: Building Agents That React

Why polling breaks at scale, how event queues and webhooks work with AI workflows, and why idempotency is non-negotiable for event-driven systems.

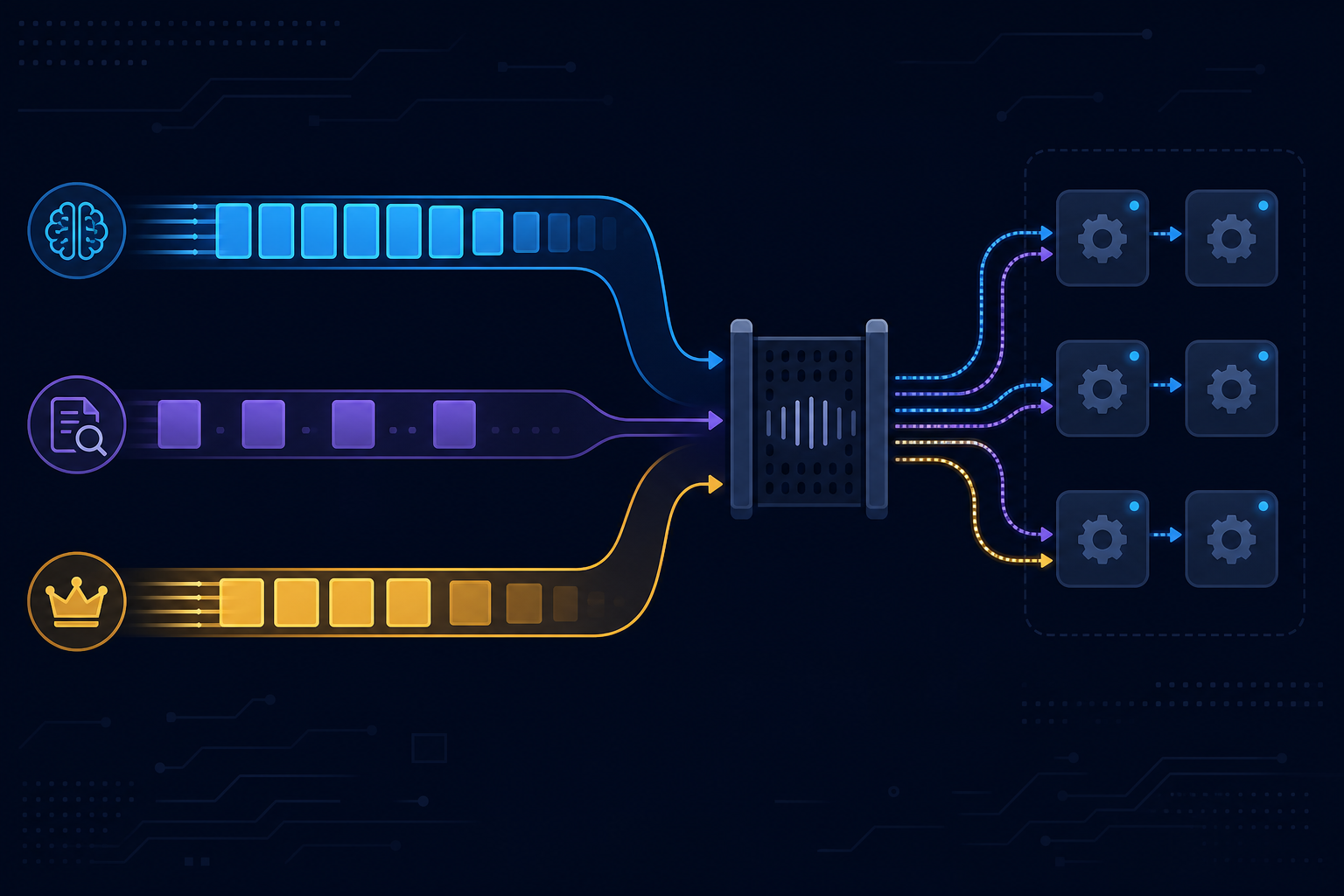

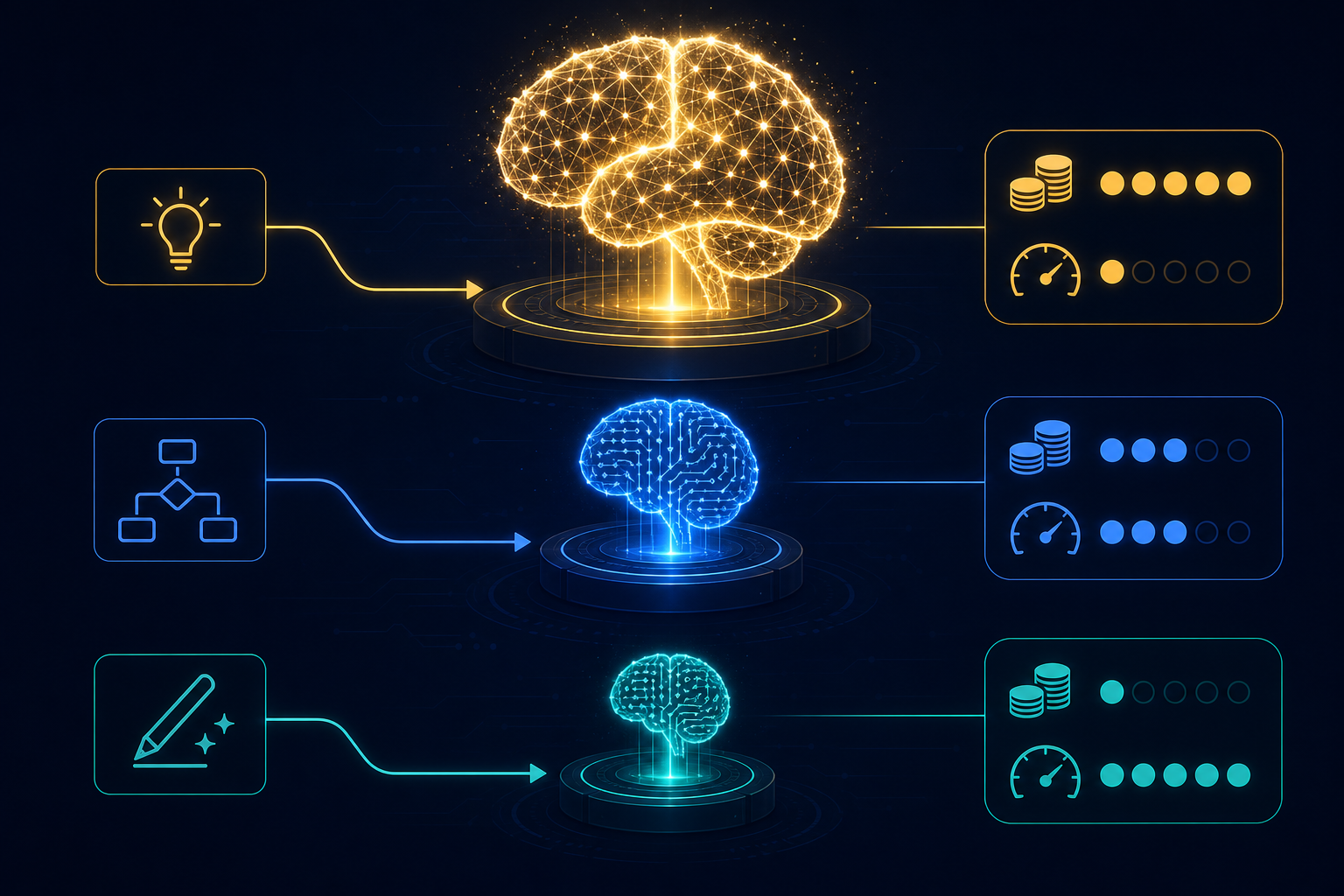

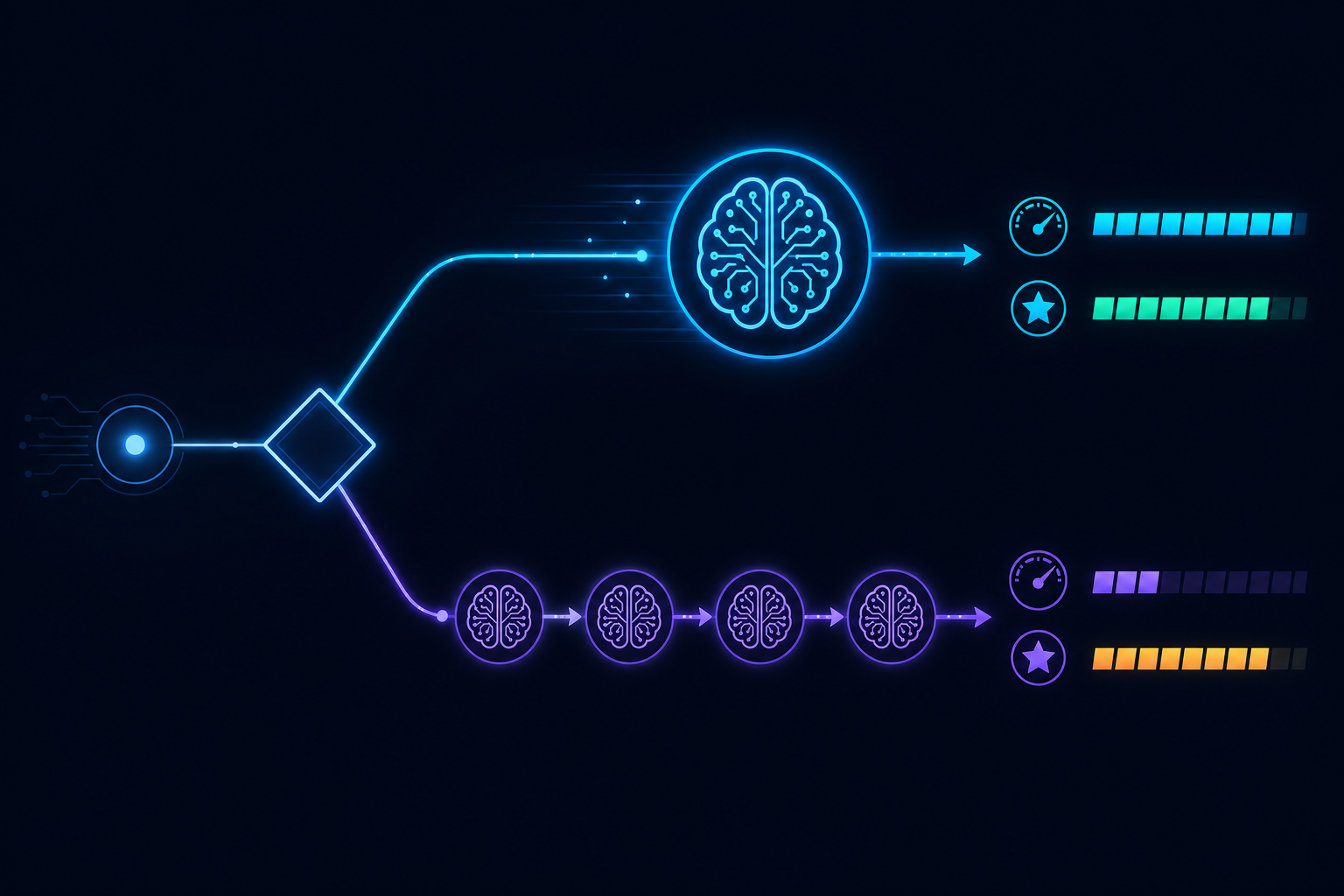

Choosing the Right LLM for Each Step in Your Workflow

A tiered model selection strategy for AI workflows: when frontier models are worth it, when they are not, and how latency changes the calculus.

Building a Lead Enrichment Pipeline with AgentRuntime

A five-stage lead enrichment workflow: intake, company research, ICP scoring, personalization signals, and CRM write-back — with the reliability patterns that make it production-ready.

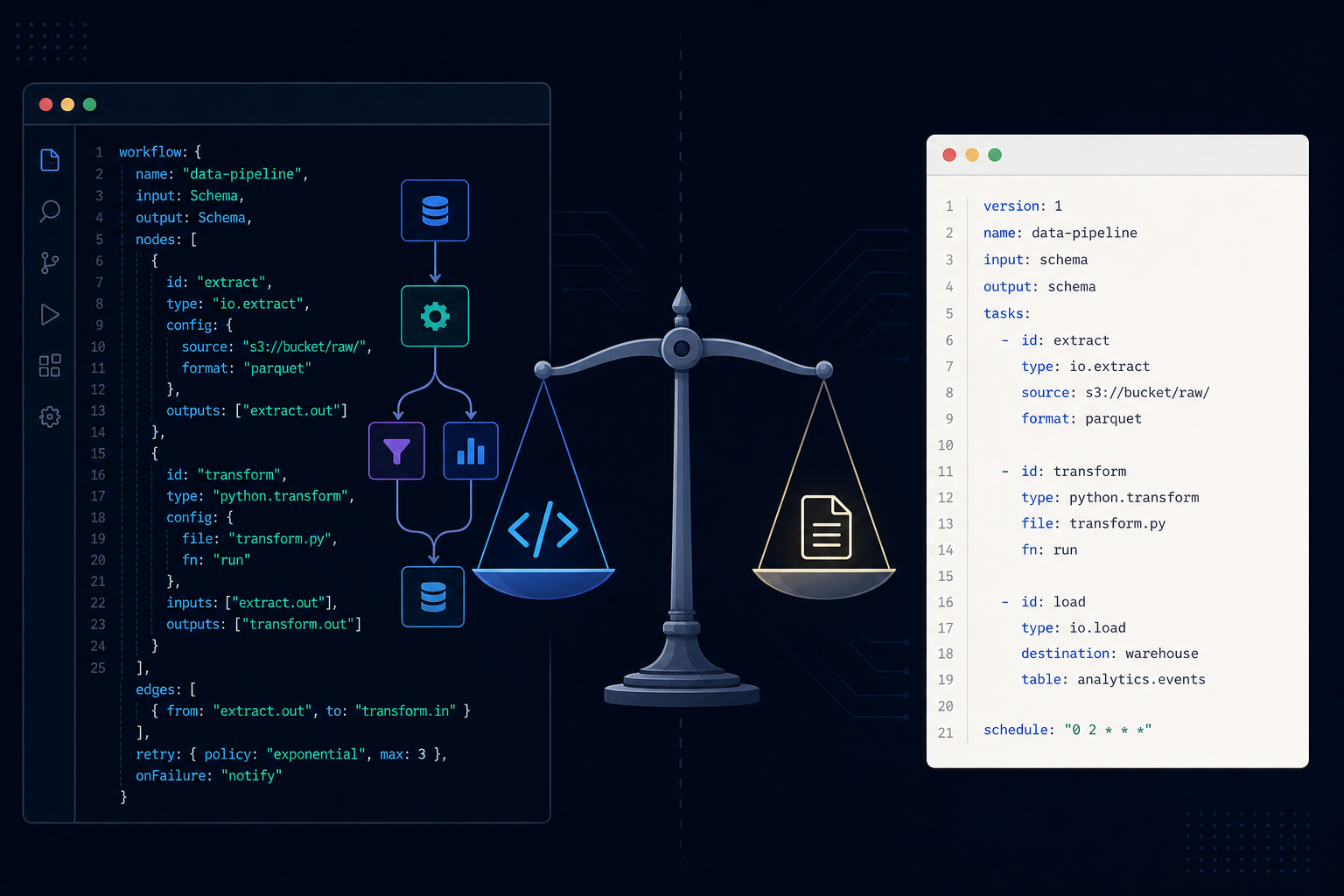

Workflow as Code vs. Workflow as Config: What the Trade-off Actually Is

YAML vs code for defining AI workflows — the genuine trade-offs, why visual-first tools are often the worst of both worlds, and how to choose.

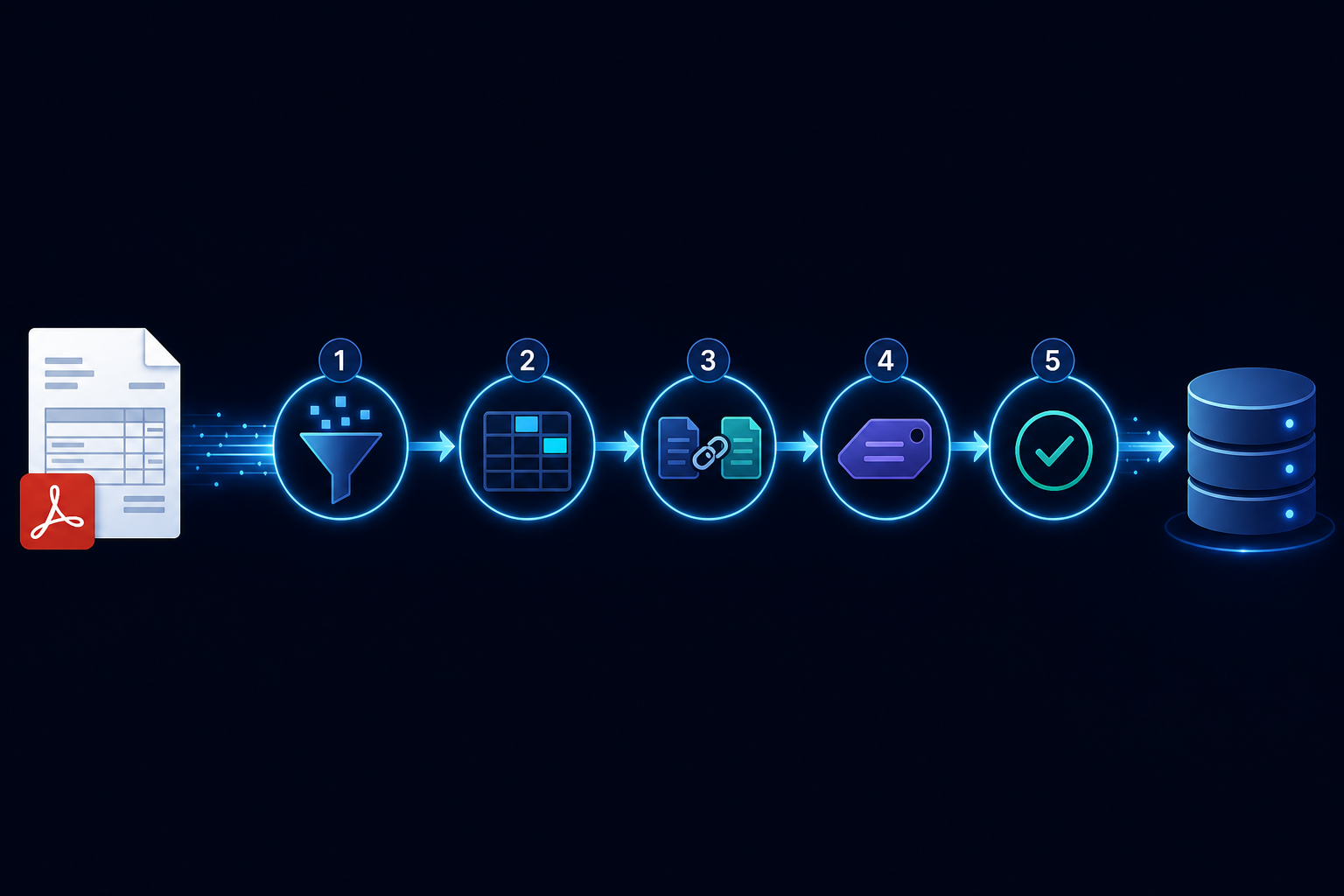

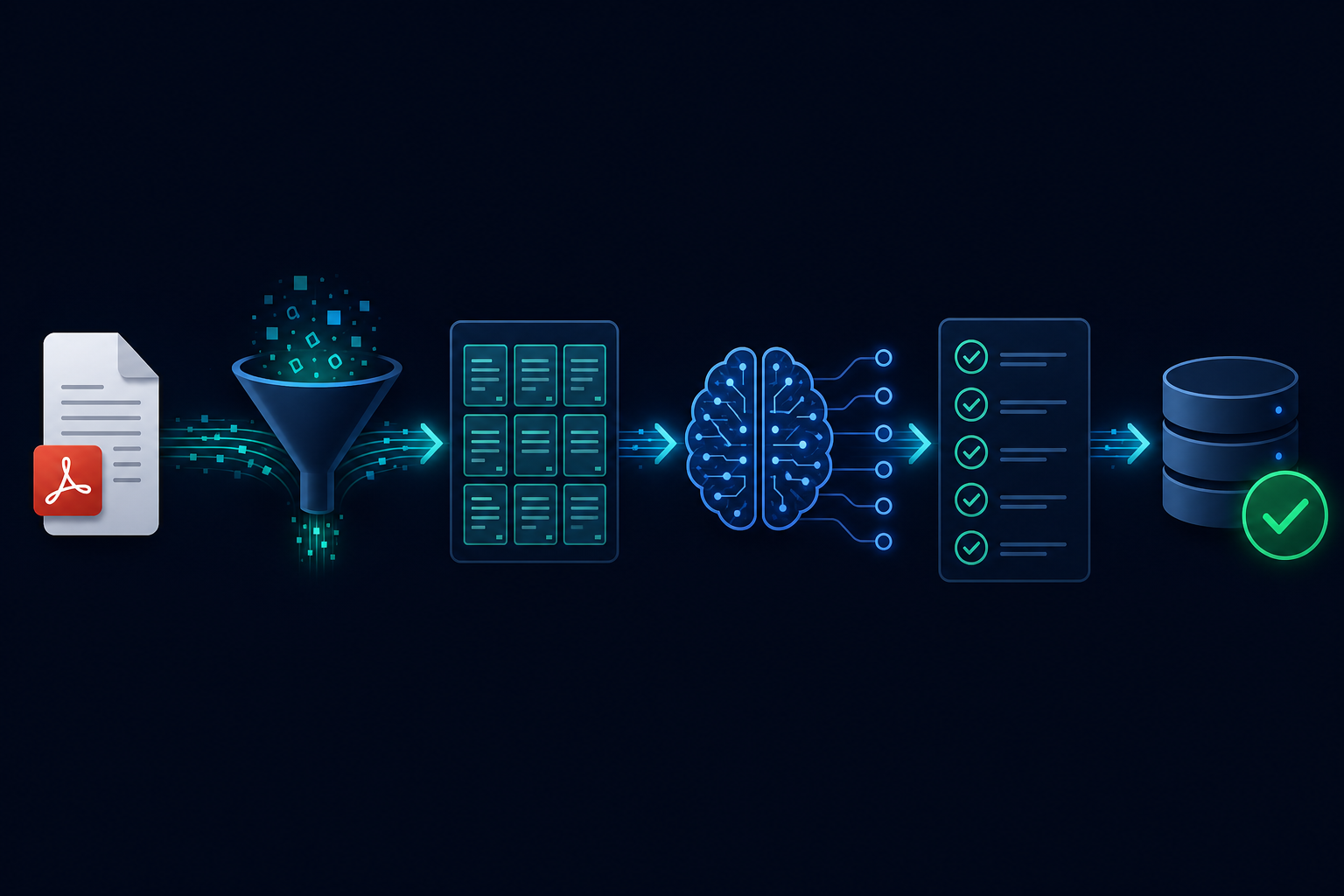

Building a Document Processing Pipeline with AgentRuntime

A five-stage production pipeline for processing documents with AI: ingestion, chunking, extraction, validation, and output routing — with the reliability patterns that matter at scale.

Context Window Management at Scale: What Breaks and How to Fix It

Larger context windows don't eliminate the need to manage context deliberately. The three failure modes and the strategies that fix them.

Graceful Degradation in AI Systems: When the Model Is Not Available

Circuit breakers, fallback strategies, and the failure spectrum for AI workflows — how to fail informatively and partially rather than completely.

Webhook Security for AI Workflows: What Most Teams Miss

Signature verification, replay attack prevention, and idempotency for webhook-triggered AI workflows: the core controls every handler needs.

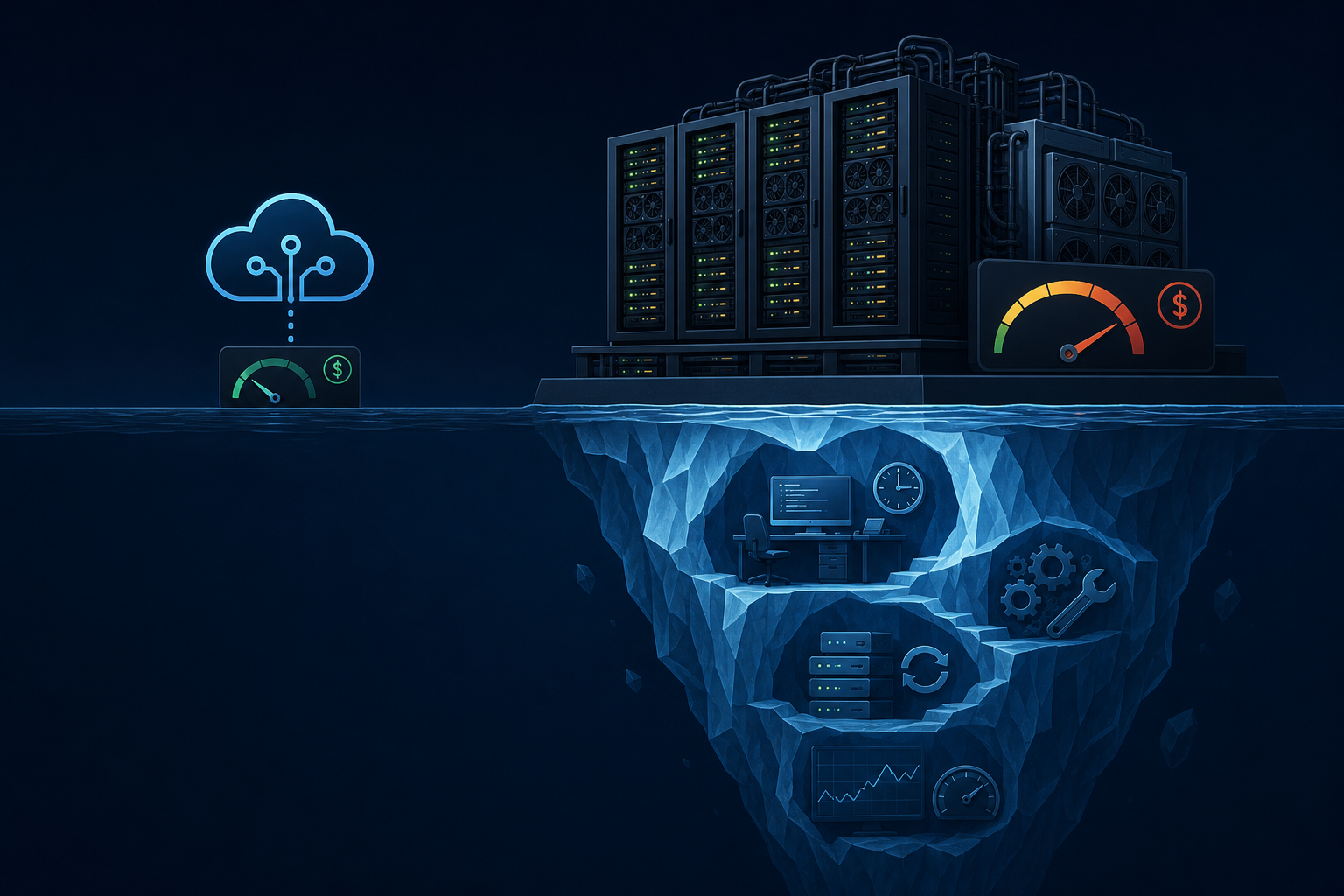

The Hidden Costs of Self-Hosting LLMs

GPU infrastructure, inference engineering, and model update overhead — the complete cost model most teams miss before deciding to self-host.

Building an AI Code Review Agent: What Actually Works

Why most code review bots get disabled and how to build one that gets adopted — narrow scope, confidence filtering, and a feedback loop.

When to Chain LLM Calls and When Not To

Chaining works for separation of concerns, not for hoping a model can handle complexity in pieces. When multi-step helps and when it hurts.

SLA Design for AI-Powered Products: Setting Expectations That Hold

Availability, latency, quality, and consistency — the four SLA dimensions for AI products, and why traditional uptime metrics are insufficient.

AI Agents for Compliance: Why Auditability Is the Whole Game

In compliance, the audit trail is the deliverable. What that means for AI workflow infrastructure: immutable records, policy versioning, and mandatory human review.

AgentRuntime vs. DIY Orchestration: What You Are Actually Building

An honest account of what production AI agent orchestration requires — and why DIY implementations accumulate hidden costs faster than most teams expect.

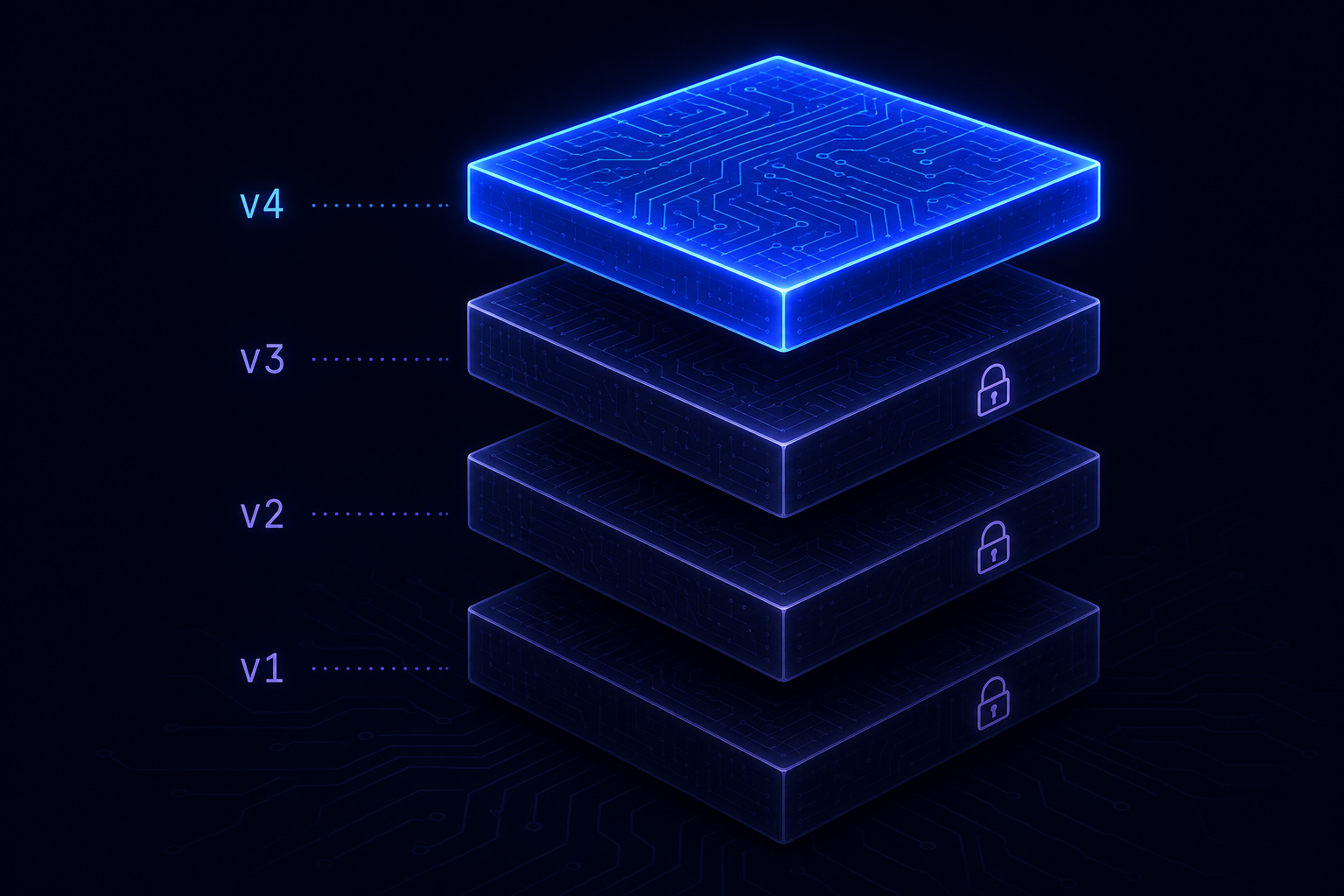

Versioning AI Workflows: Why Immutability Matters

Why mutable workflow definitions create debugging nightmares, compliance gaps, and rollback problems — and what immutable versioning looks like in practice.

Credential Management for AI Agents: Beyond Environment Variables

Why environment variables are the wrong answer for AI agent credentials — and the four properties of a production-grade secrets architecture.

Building a Customer Support Automation with AgentRuntime

A step-by-step walkthrough of a production customer support workflow: classification, CRM enrichment, LLM drafting, human review, and escalation.

Parallel Execution in AI Workflows: When to Fan Out and When Not To

The fan-out/fan-in pattern, nested runs for batch processing, failure handling strategies, and rate limit pitfalls for parallel AI workflows.

Multi-Tenant AI Infrastructure: Isolating Workflows Across Customers

What multi-tenancy means for AI workflow infrastructure, why naive implementations fail, and the three architectural decisions to get right from the start.

Observability for AI Agents: What to Trace and Why

The three layers of observability for AI workflows — run-level traces, step-level spans, and structured logs — and the questions each one lets you answer.

Simulate Before You Deploy: Why Pre-Flight Validation Saves Production Incidents

Schema validation, dependency checks, and graph linting for AI workflows — why simulation is the missing step between development and production.

Human-in-the-Loop: How to Build Approval Gates Into AI Workflows

Three HITL patterns for AI workflows — approve before irreversible action, review on threshold, and async audit — with the infrastructure they require.

What Is MCP and Why It Changes How AI Agents Use Tools

Model Context Protocol explained: what it is, why it was needed, and what native MCP support means for production agent infrastructure.

Why AI Agents Fail in Production (And What to Do About It)

The four infrastructure failure modes that break AI agents in production — and the patterns that fix them.

Introducing the AgentRuntime blog

Product updates, engineering notes, and practical guidance for running AI agents in production on AgentRuntime.